A structured internal link map can improve crawl efficiency by up to 40%, helping LLMs accurately index your core features and making your SaaS more likely to appear in the AI-generated answers that drive higher-intent organic signups.

Modern search is no longer just about a bot following a trail of breadcrumbs to a URL; it’s about an AI agent trying to build a mental model of what your software actually does. If your internal links are a tangled web of “click here” and “read more,” you’re essentially leaving the AI to guess your product’s value. To dominate in a world of Generative Engine Optimization (GEO), we have to stop building silos and start building a knowledge graph.

Before we dive into the “how-to,” let’s look at how AI-ready mapping differs from the old-school SEO approach:

The Shift: Traditional SEO vs. AI-First Semantic Mapping

| Feature | Traditional SEO Silos | AI-First Semantic Mapping |
| --- | --- | --- |
| Primary Goal | PageRank distribution & keyword density. | Entity relationship & context extraction. |
| Link Anchor | Exact match keywords (e.g., “SaaS tool”). | Descriptive semantic triples (e.g., “Tool X automates Y”). |
| Structure | Linear folders (/blog/topic). | Hub-and-spoke with multi-directional nodes. |
| LLM Impact | Helps with indexing pages. | Drives mentions in AI-generated “best of” answers. |
| Data Signal | Click-through rate (CTR). | Semantic relevance and entity authority signals. |

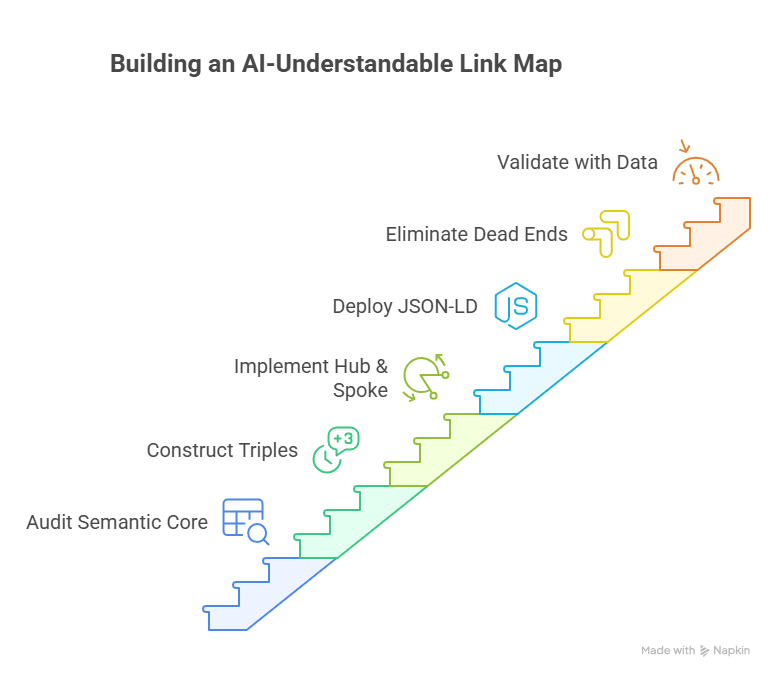

Step 1: Audit Your Semantic Core and Entity Nodes

To build a map that a machine understands, you first need to define your “Nodes”: the fixed points of authority on your site. In a SaaS context, these aren’t just “pages”; they are Entities.

Think of your “Product Analytics” page not as a URL, but as a primary entity. Your “Attribution Modeling” feature is a sub-entity. Your blog post about “Reducing Churn” is a supporting node.

The Implementation Goal:

Identify your top 5 “Money Entities” (the pages that drive MRR) and your 20 “Supporting Nodes” (the content that proves you know your stuff).

- Actionable Task: Create a simple spreadsheet. Column A is your Entity (e.g., Multi-touch Attribution). Column B is the Predicate (what it does, e.g., calculates). Column C is the Object (the result, e.g., ROI/CAC ratio).

When you audit this way, you stop linking randomly. You start linking because Multi-touch Attribution (Entity A) is the solution to Inaccurate Data (Node B). This creates a logical flow that an LLM like Gemini can parse as: “Company X provides a solution for Y by doing Z.”
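The three-column spreadsheet described above can also be kept as a small data structure, which makes it easier to reuse when writing anchor sentences. This is a minimal sketch, not a prescribed format: the `EntityTriple` class, the `ENTITY_MAP` list, and the URL paths are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EntityTriple:
    """One spreadsheet row: Column A (entity), B (predicate), C (object)."""
    entity: str
    predicate: str
    obj: str
    url: str  # hypothetical page path for the entity

    def as_sentence(self) -> str:
        # Join the three columns into a plain subject-predicate-object statement.
        return f"{self.entity} {self.predicate} {self.obj}."


# Example rows; the URLs are placeholders, not real paths.
ENTITY_MAP = [
    EntityTriple("Multi-touch Attribution", "calculates", "ROI/CAC ratio",
                 "/features/attribution"),
    EntityTriple("Product Analytics", "identifies", "high-risk churn patterns",
                 "/features/product-analytics"),
]

for row in ENTITY_MAP:
    print(row.url, "->", row.as_sentence())
```

Keeping one row per entity makes gaps visible: an entity with no clear predicate or object is usually a page whose purpose has not been defined yet.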

Also read: What a Revenue-Driven SaaS Content Strategy Actually Looks Like

Step 2: Construct Semantic Triples within Anchor Sentences

Semantic triples in anchor text turn vague links into machine-readable facts, allowing AI to extract your product’s core value propositions directly from your site’s architecture.

If you want an LLM to recommend your software, you have to stop using “empty” anchor text. Phrases like “click here,” “this feature,” or even “best SaaS tool” carry zero semantic weight. To an AI, these are missed opportunities to define a relationship. Instead, we use Semantic Triples: a linguistic structure consisting of a Subject, a Predicate (an action or relationship), and an Object.

The Anatomy of a High-Authority Link

- Wrap every internal link in a sentence that explicitly defines the relationship between the two pages, so the link matches the fact-extraction patterns AI systems rely on.

When you link from a blog post about “Reducing Churn” to your “Product Analytics Dashboard,” the sentence should look like this: “Our product analytics dashboard [Subject] identifies [Predicate] high-risk churn patterns [Object].”

By doing this, you aren’t just sending a user to a new page; you are feeding the LLM a verifiable fact it can use to answer a prompt like, “Which tool helps me identify churn patterns?”

Moving from “Keywords” to “Claims”

- Move from hyperlinking a single keyword to hyperlinking a descriptive phrase that contains a functional claim or data-backed insight.

In the PLG (Product-Led Growth) world, your links should act as micro-conversions. Instead of linking the word “attribution,” link the phrase “automated multi-touch attribution for B2B SaaS.” This provides the AI with the specific niche and utility of your page, moving it up the “knowledge graph” for that specific entity.
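One way to find links that still use “empty” anchors is to scan each page’s HTML and flag anchors that are generic or too short to carry a claim. The sketch below uses only Python’s standard `html.parser`. The `GENERIC_ANCHORS` list and the three-word threshold are assumptions to tune for your own site, not established rules.

```python
from html.parser import HTMLParser

# Assumed list of "empty" anchors; extend it for your own site.
GENERIC_ANCHORS = {"click here", "read more", "this feature", "learn more", "here"}


class AnchorCollector(HTMLParser):
    """Collects (href, anchor text) pairs from an HTML fragment."""

    def __init__(self):
        super().__init__()
        self.links = []      # finished (href, text) pairs
        self._href = None    # href of the <a> currently open, if any
        self._text = []      # text pieces seen inside that <a>

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace so line breaks inside the anchor do not matter.
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def weak_anchors(html: str, min_words: int = 3):
    """Return links whose anchor is generic or shorter than min_words."""
    parser = AnchorCollector()
    parser.feed(html)
    return [
        (href, text)
        for href, text in parser.links
        if text.lower() in GENERIC_ANCHORS or len(text.split()) < min_words
    ]


sample = (
    '<p>See <a href="/features/attribution">attribution</a> or '
    '<a href="/features/attribution">automated multi-touch attribution '
    'for B2B SaaS</a>. <a href="/blog/churn">Click here</a>.</p>'
)
print(weak_anchors(sample))
```

A word-count threshold is a crude proxy for “contains a claim,” so treat the output as a review queue rather than a list of errors.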

Also read: How to Measure SaaS Content ROI Beyond Traffic

Step 3: Implement “High-Intent” Hub and Spoke Architecture

A high-intent hub and spoke architecture concentrates topical authority into “Money Pages,” ensuring that both users and LLMs recognize your core features as the definitive solution for specific SaaS problems.

In a traditional blog setup, content often lives in a flat hierarchy where every post has equal weight. For an AI, this creates “semantic noise”; it can’t tell which page is your primary authority on a topic. To fix this, we use the Hub and Spoke model. The “Hub” is your comprehensive feature or category page, and the “Spokes” are supporting blog posts, case studies, and documentation that link back to that Hub with consistent semantic signals.

Building Your Authority Hubs

- Design Hub pages as “comprehensive entity definitions” that summarize a broad topic (e.g., “SaaS Retention Strategies”) and link out to specific, detailed spokes.

Your Hub page shouldn’t just be a landing page; it should be a “Glossary of Truth” for your niche. For example, if you are an attribution tool like Usermaven, your Hub page for “Marketing Attribution” should define every major model (First-touch, Last-touch, Linear). This signals to an AI that this URL is the “Root Node” for that entire subject.

Connecting Spokes to Conversion Nodes

- Link “Problem-Aware” spokes (e.g., a blog post on “Why is my CAC so high?”) to “Solution-Aware” hubs using high-intent bridge sentences.

The goal of your spokes is to validate the user’s problem and then point them toward the Hub as the resolution. Every internal link from a spoke to a hub should answer a “How” or “Why” question. For instance: “Implementing a multi-touch attribution model [Link to Hub] recovers [Predicate] lost conversion data [Object] from fragmented customer journeys.”
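Once each spoke has an assigned hub, checking that the link actually exists is a simple lookup. The following is a rough sketch that assumes you already have the site’s internal links exported as a mapping from each page to the pages it links to. The function name, the `hub_for` mapping, and the example paths are all hypothetical.

```python
def spokes_missing_hub_link(links: dict[str, set[str]],
                            hub_for: dict[str, str]) -> list[str]:
    """Return spokes that do not link to their assigned hub.

    links   maps each page to the set of internal pages it links to.
    hub_for maps each spoke to the hub it is meant to support.
    """
    return sorted(
        spoke for spoke, hub in hub_for.items()
        if hub not in links.get(spoke, set())
    )


# Placeholder paths for illustration only.
links = {
    "/blog/why-is-my-cac-so-high": {"/hubs/marketing-attribution"},
    "/blog/reducing-churn": {"/blog/why-is-my-cac-so-high"},
}
hub_for = {
    "/blog/why-is-my-cac-so-high": "/hubs/marketing-attribution",
    "/blog/reducing-churn": "/hubs/retention-analytics",
}
print(spokes_missing_hub_link(links, hub_for))
```

This only confirms that a link exists; whether the surrounding sentence works as a bridge still needs a human read.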

Also read: The Complete Guide to SaaS Content Marketing in the AI Era

Step 4: Deploy JSON-LD Breadcrumbs for Machine-Readable Hierarchy

JSON-LD breadcrumb schema provides a structured data layer that mirrors your internal link map, giving AI agents a verified, machine-readable map of your SaaS site’s topical depth and authority.

While internal links provide the “connective tissue” within your content, JSON-LD (JavaScript Object Notation for Linked Data) acts as the “DNA” of your site structure. It tells LLMs exactly where a page sits in your ecosystem without requiring them to infer it from visual cues. For a SaaS company, this is critical because it ensures your “Feature” pages aren’t just seen as random subfolders, but as high-level solutions to specific industry problems.

Translating Visual Links into Schema Code

- Make the breadcrumb trail reflect the “Hub and Spoke” logic, moving from the Home Entity to the Category Hub and finally to the specific Spoke or Feature page.

Don’t settle for simple “Home > Blog > Title” paths. Your breadcrumbs should reinforce your entity nodes. For example, if you’re writing about attribution, your JSON-LD should map a path like: Home > Attribution Solutions > Multi-Touch Attribution > [Current Article]. This explicit hierarchy allows an LLM to “cluster” your content correctly, associating your brand with the broader parent category.
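As an illustration, the path above can be expressed as a schema.org `BreadcrumbList` and generated from a list of (name, URL) pairs. This is a minimal sketch: the `breadcrumb_jsonld` helper is a hypothetical name, and the `example.com` URLs and the “Current Article” label are placeholders for your own pages.

```python
import json


def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build a schema.org BreadcrumbList from (name, url) pairs, root first."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            # Positions are 1-based and follow the order of the trail.
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(trail, start=1)
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder trail mirroring Home > Attribution Solutions > Multi-Touch Attribution.
trail = [
    ("Home", "https://example.com/"),
    ("Attribution Solutions", "https://example.com/attribution/"),
    ("Multi-Touch Attribution", "https://example.com/attribution/multi-touch/"),
    ("Current Article", "https://example.com/attribution/multi-touch/current-article/"),
]

# The JSON-LD is embedded in the page inside a script tag of this type.
print(f'<script type="application/ld+json">\n{breadcrumb_jsonld(trail)}\n</script>')
```

Generating the trail from the same data that drives your visible breadcrumbs helps keep the schema and the on-page links from drifting apart.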

Validating Hierarchy for “Rich Snippet” and AI Citation

- A clean breadcrumb schema makes it more likely that your internal link structure surfaces in Google’s AI Overviews (formerly Search Generative Experience, SGE) and in ChatGPT’s browsing citations.

When an AI agent searches for a solution, it looks for sites that show organized expertise. A well-implemented breadcrumb schema proves that your content isn’t a series of isolated posts, but a comprehensive knowledge base. If the schema matches the actual internal link map you’ve built in Step 3, the “confidence score” the AI assigns to your site’s data increases significantly.

Also read: Is Your SaaS Website AI-Ready? A 20-Point LLM Ingestion Checklist

Step 5: Eliminate Semantic Dead Ends and Orphaned Nodes

Eliminating “orphaned” pages ensures that every piece of content contributes to your site’s overall authority, preventing AI crawlers from losing track of high-value product information.

In many SaaS blogs, articles are published and then forgotten, eventually becoming “orphaned,” meaning no other page on your site links to them. To an AI, an orphaned page is a low-authority island. If it’s not connected to your core “nodes,” the LLM assumes the information is outdated or irrelevant to your primary business. To build a map the AI trusts, every page must lead somewhere, and every “money page” must be reachable within 2-3 hops.

Auditing for “Leaky” Content Funnels

- Use a site crawler to identify pages with zero incoming internal links and pages that fail to link back to a conversion-focused “Hub.”

A “semantic dead end” occurs when a user (or a bot) reaches a high-quality blog post that doesn’t provide a clear next step. If your post on “Reducing Churn” doesn’t link to your “Retention Analytics” feature, you’ve broken the chain of logic. For an AI, this means it cannot definitively link your expertise (the blog) to your solution (the software).
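If your crawler can export internal links as a page-to-targets mapping, the three checks in this step (orphans, dead ends, and hubs buried too deep) can be sketched in a few lines. The function name, the input shape, and the example paths below are assumptions for illustration. The default of three hops reflects the 2-3 hop guideline above.

```python
from collections import deque


def audit_link_graph(links, home, hubs, max_hops=3):
    """Flag orphans, dead ends, and hubs that sit too deep.

    links maps each page to the set of internal pages it links to;
    hubs is a set of hub page paths.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}

    # Orphans: pages no other page links to (the home page is exempt).
    linked_to = {t for src, targets in links.items() for t in targets if t != src}
    orphans = sorted(pages - linked_to - {home})

    # Dead ends: non-hub pages with no outgoing link to any hub.
    dead_ends = sorted(
        p for p in pages
        if p != home and p not in hubs and not (links.get(p, set()) & hubs)
    )

    # Breadth-first search from home gives the minimum hop count per page.
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depth:
                depth[target] = depth[page] + 1
                queue.append(target)

    # Hubs that are unreachable from home or deeper than max_hops.
    deep_hubs = sorted(h for h in hubs if depth.get(h, max_hops + 1) > max_hops)
    return {"orphans": orphans, "dead_ends": dead_ends, "deep_hubs": deep_hubs}


# Placeholder site graph for illustration only.
links = {
    "/": {"/blog/reducing-churn", "/features/retention-analytics"},
    "/blog/reducing-churn": set(),
    "/blog/old-post": {"/features/retention-analytics"},
    "/features/retention-analytics": set(),
}
report = audit_link_graph(links, home="/", hubs={"/features/retention-analytics"})
print(report)
```

In this example the “Reducing Churn” post is reported as a dead end because it never links to the retention hub, which is exactly the broken chain described above.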

Re-linking “Legacy” Assets to Current Growth Nodes

- Update older, high-traffic posts with fresh internal links that point toward new feature launches or updated product-led discovery paths.

You likely have “legacy” posts that still get organic traffic but link to outdated versions of your product. By performing a “Semantic Bridge Audit,” you can reconnect these high-authority pages to your current MRR-driving hubs. This “wakes up” the old content, signaling to the AI that your knowledge graph is active, maintained, and authoritative.

Also read: How to Write Extractable Answers That LLMs Cite

Step 6: Validate with AI-Specific Attribution and Crawl Data

Validating your link map with specific attribution data allows you to prove that your semantic architecture is actually influencing AI-generated answers and driving product-led growth.

The final step isn’t just “setting and forgetting” your links; it’s confirming that AI agents are actually traversing the paths you’ve built. In a traditional SEO world, we look at “PageRank.” In a GEO (Generative Engine Optimization) world, we look at Entity Citations. You want to see if your “Hub” pages are being cited by LLMs as the primary source for technical definitions or solution recommendations.

Monitoring Crawl Efficiency and Node Discovery

- Use tools like Google Search Console to monitor “Crawl Stats,” looking for an increase in how often bots visit your high-priority “Money Entities.”

If your internal link map is working, you will see a “clustering” effect in your crawl data. Instead of bots hitting random blog posts, you should see them moving systematically from Spokes to Hubs. If your “Product Analytics” hub isn’t being crawled at least once a week, your internal link weight is likely too diluted.
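The once-a-week threshold can be checked programmatically if you have per-URL crawl timestamps. The sketch below assumes a list of `(url, datetime)` bot hits, for example parsed from your own server logs and filtered to crawler user agents. That input shape, the `stale_hubs` name, and the example dates are hypothetical, and timestamps are assumed to be naive datetimes in a single timezone.

```python
from datetime import datetime, timedelta


def stale_hubs(crawl_hits, hubs, now, max_gap=timedelta(days=7)):
    """Return hubs whose most recent bot visit is older than max_gap (or missing).

    crawl_hits is an iterable of (url, datetime) pairs.
    """
    last_seen = {}
    for url, seen_at in crawl_hits:
        # Keep only the latest visit per hub.
        if url in hubs and seen_at > last_seen.get(url, datetime.min):
            last_seen[url] = seen_at
    # Hubs never seen fall back to datetime.min and are always reported.
    return sorted(h for h in hubs if now - last_seen.get(h, datetime.min) > max_gap)


# Made-up example data.
now = datetime(2024, 6, 15)
hits = [
    ("/features/product-analytics", datetime(2024, 6, 1)),
    ("/features/attribution", datetime(2024, 6, 12)),
    ("/blog/some-post", datetime(2024, 6, 14)),
]
hubs = {"/features/product-analytics", "/features/attribution"}
print(stale_hubs(hits, hubs, now))
```

A hub that shows up here is a candidate for more inbound links from recently crawled spokes.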

Using Attribution Tools to Track “Assisted Conversions”

- Use an attribution platform like Usermaven to see whether users who eventually sign up are following the “semantic paths” you laid out in your internal link map.

This is where the ROI becomes clear. By tracking the “content path” of a user, you can see if they landed on a “Problem-Aware” spoke and used your semantic bridge to reach a “Solution-Aware” feature page. If the data shows users jumping from a blog post to a signup after clicking your “Semantic Triple” link, you’ve successfully aligned your site for both humans and AI.
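To make the “content path” idea concrete, here is a minimal sketch that measures what share of converting sessions went from a spoke to a hub to signup, in that order. It assumes session paths are available as ordered lists of page paths. This is a hypothetical export shape rather than any particular platform’s format, and the function name and `/signup` path are placeholders.

```python
def semantic_path_share(sessions, spokes, hubs, signup="/signup"):
    """Share of converting sessions that went spoke -> hub -> signup, in order.

    sessions is a list of page-path lists, one list per user journey.
    """
    converted = [s for s in sessions if signup in s]
    if not converted:
        return 0.0

    def followed_path(pages):
        stage = 0  # 0: waiting for a spoke, 1: waiting for a hub, 2: waiting for signup
        for page in pages:
            if stage == 0 and page in spokes:
                stage = 1
            elif stage == 1 and page in hubs:
                stage = 2
            elif stage == 2 and page == signup:
                return True
        return False

    return sum(followed_path(s) for s in converted) / len(converted)


# Made-up journeys for illustration only.
sessions = [
    ["/blog/why-is-my-cac-so-high", "/features/attribution", "/signup"],
    ["/", "/pricing", "/signup"],
    ["/blog/why-is-my-cac-so-high"],
]
share = semantic_path_share(
    sessions,
    spokes={"/blog/why-is-my-cac-so-high"},
    hubs={"/features/attribution"},
)
print(share)
```

Other pages may appear between the three stages; the check only requires that the spoke, hub, and signup occur in that order.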

Why Scaling SaaS Companies Partner with SaaS Leady

Building a semantic map is technically demanding and requires a deep understanding of how LLMs interpret site architecture. At SaaS Leady, we specialize in Generative Engine Optimization (GEO), helping B2B SaaS brands move beyond traditional SEO to become the primary recommendation in AI-driven search results.

We don’t just “build links”; we architect authority. Whether you’re looking to turn your blog into a signup machine (like our work scaling Replug to 125k monthly visits) or you need to ensure your product features are the first things cited by ChatGPT and Gemini, we provide the strategy and execution to make it happen.

- Entity Mapping: We define your product nodes so AI agents can’t ignore them.

- Semantic Content: We produce human-first content optimized for machine extraction.

- Authority Building: High-quality link strategies designed for both Google and LLMs.

FAQ: Building Internal Link Maps for the AI Era

Does internal linking still matter if most traffic comes from AI answers?

Yes, even more so. AI models like Perplexity and Gemini “browse” the web to find citations. If your internal links don’t clearly define your product’s utility through semantic triples, the AI will likely cite a competitor who has a more organized knowledge graph.

How many internal links should I have per 1,000 words?

Quality outweighs quantity. Instead of a specific number, focus on Relevance Density. Ensure you have at least 3-5 high-intent links that follow the “Subject-Predicate-Object” model, pointing directly toward your relevant feature hubs.

Can I automate this process with plugins?

While plugins can suggest links based on keywords, they often lack the “strategic intent” required for AI understanding. Automated tools rarely create the nuanced semantic triples needed to explain why two pages are related. A manual or semi-automated approach guided by an entity map is far more effective for GEO.

How long does it take for LLMs to recognize a new link map?

Unlike Google, which might update rankings in weeks, LLMs may take longer to “retrain” or “refresh” their understanding of your site unless you signal structural changes right away, for example through the IndexNow protocol or updated JSON-LD schema. Typically, you’ll see shifts in AI citations within 4-8 weeks of a full semantic re-mapping.