The SaaS content landscape has officially shifted: organic success is no longer measured by “blue link” clicks, but by your brand’s presence as a cited source in AI-generated answers, directly dictating your share of the B2B buyer’s journey.

Traditional SEO vs. GEO (Generative Engine Optimization)

If your 2026 growth plan still relies on the 2022 “Skyscraper Technique,” you’re essentially ghostwriting for your competitors’ AI agents.

Traditional SEO was a game of volume and backlinks; you wrote 3,000 words on “Project Management Software,” optimized for a specific keyword density, and hoped to land in the top three results. Today, buyers are skipping the search results page entirely. They’re asking Perplexity, “Which project management tool integrates best with Slack and supports agile sprints for a team of 50?”

In this world, Generative Engine Optimization (GEO) takes the lead. While traditional SEO focuses on discovery through keywords, GEO focuses on authority through consensus.

- Traditional SEO is built for the click: It prioritizes CTR (Click-Through Rate) and dwell time.

- GEO is built for the citation: It prioritizes being the “factual anchor” the LLM uses to build its response.

For SaaS teams, this means shifting your budget from high-volume “What is…” top-of-funnel fluff to high-authority technical documentation and original research. If an AI model cannot verify your claims across multiple “authority signals,” such as your technical docs, case studies, and third-party reviews, you simply won’t exist in the generated answer.

Should You Pivot Your Entire Content Budget to AI-First?

If your CMO is asking for the ROI of “optimizing for ChatGPT,” they’re looking for a defensive strategy against the 60% of searches that now end without a single click to a website. The decision isn’t about abandoning Google; it’s about reallocating the 20-40% of your budget currently wasted on “thin” informational keywords toward high-authority assets that AI models actually cite.

While traditional SEO focuses on the volume of the funnel, AI-first content (GEO) focuses on the velocity of the funnel. Data from 2025-2026 indicates that while AI search might drive fewer total sessions, the visitors who do click through from a citation convert at a rate 4.4x higher than traditional organic search. They arrive “pre-educated” by the LLM, having already bypassed the initial awareness hurdles.
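To make the volume-versus-velocity trade-off concrete, here is a minimal arithmetic sketch. The 4.4x multiplier is the figure quoted above; the baseline of 10,000 sessions and the 1% conversion rate are hypothetical numbers chosen only for illustration.

```python
def breakeven_session_share(conversion_multiplier: float) -> float:
    """Share of baseline sessions a higher-converting channel needs
    in order to produce the same number of signups."""
    if conversion_multiplier <= 0:
        raise ValueError("conversion_multiplier must be positive")
    return 1.0 / conversion_multiplier


# Hypothetical baseline, not real data.
organic_sessions = 10_000
organic_rate = 0.01

# Multiplier taken from the claim in the text above.
ai_multiplier = 4.4

organic_signups = organic_sessions * organic_rate
share = breakeven_session_share(ai_multiplier)
ai_sessions_needed = round(organic_sessions * share)

print(f"Organic signups: {organic_signups:.0f}")
print(f"Break-even share of sessions: {share:.1%}")
print(f"AI-referred sessions needed to match: {ai_sessions_needed}")
```

Under these assumptions, an AI-referred channel needs a little under a quarter of the organic session count to deliver the same number of signups. Swap in your own baseline before drawing conclusions.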

Legacy SEO vs. AI-First (GEO) Comparison

| Metric | Traditional SEO (Legacy) | AI-First (GEO) |
| --- | --- | --- |
| Primary Goal | Rank #1 for high-volume keywords | Earn citations in LLM responses |
| User Intent | Browsing / Informational | Decision-making / Tool selection |
| Typical ROI | ~700% over 3 years (volume-based) | 6x higher conversion rates (intent-based) |
| Funnel Impact | Wide TOFU awareness | Accelerated MOFU/BOFU |
| Content Type | 3,000-word “Skyscraper” posts | Original research, technical docs, and FAQs |

For early-stage SaaS teams, the “at zero” starting point is actually an advantage. You aren’t burdened by thousands of legacy pages that require auditing. Instead, you can anchor your strategy in original research, the single most cited content type by LLMs. By publishing unique data (e.g., “The 2026 Benchmark for PLG Churn Rates”), you provide the “ground truth” that AI agents need to answer user queries, effectively turning the LLM into a high-intent referral engine.

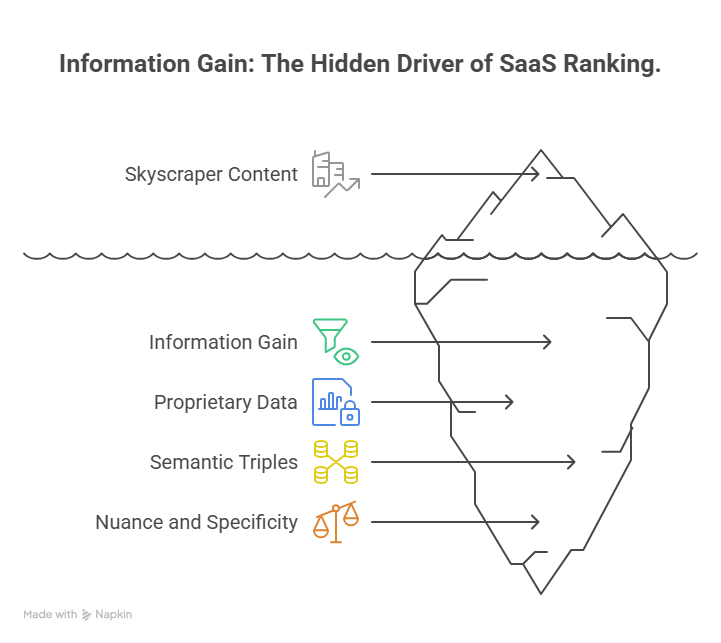

The Rise of “Information Gain” as the Primary SaaS Ranking Factor

In the era of AI search, “Skyscraper” content is no longer the gold standard; it’s a liability. If your article is just a better-formatted version of what already exists on the web, an LLM has zero reason to cite you. Why would it? It already “knows” that information from its training data.

To win now, you need Information Gain. This is the measurable amount of new information a piece of content provides compared to what the AI has already seen. For a SaaS brand, information gain is your “moat.” If you aren’t providing a unique dataset, a contrarian take based on founder experience, or a highly specific technical breakdown, you’re just background noise.

Using Proprietary Data to Anchor Entity Recognition

AI models are obsessed with “facts” they can verify. When you publish proprietary data, like how your specific user base navigates a certain workflow, you aren’t just writing a blog post; you’re creating a knowledge anchor.

By framing your insights as “semantic triples” (Subject-Predicate-Object), you make it easy for an LLM to extract your data as a factual truth.

- Example: “Automated lead scoring reduces SDR follow-up time by 40%.”

- Why it works: This is a clean, extractable fact that an AI can use to answer a user’s question about the ROI of sales automation.
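One way to keep claims in this shape is to store them as structured data first and render the sentence second. The sketch below is illustrative only: the `Triple` class and its field names are hypothetical, not part of any standard library or tool.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Triple:
    """A Subject-Predicate-Object claim (hypothetical helper for illustration)."""
    subject: str
    predicate: str
    obj: str

    def to_sentence(self) -> str:
        # Render the triple as one short, declarative sentence.
        return f"{self.subject} {self.predicate} {self.obj}."


# The example claim from the text above.
claim = Triple("Automated lead scoring", "reduces", "SDR follow-up time by 40%")

print(claim.to_sentence())
print(json.dumps(asdict(claim)))
```

Keeping the subject, predicate, and object separate makes it easier to spot claims that are missing a measurable object before they reach a published page.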

Why Generic “What is [X]?” Content is Officially Dead

The “What is SaaS?” or “What is CRM?” articles are now the exclusive domain of the LLMs themselves. If a user asks a search engine a definition-based question, the AI provides the answer directly on the results page. There is no click. There is no lead.

For SaaS teams, this means shifting focus from Definitions to Nuance.

- Don’t write: “What is Product-Led Growth?”

- Do write: “Why PLG fails for Enterprise SaaS with a $50k+ ACV.”

The second headline provides “Information Gain.” It offers a specific constraint, a targeted audience, and a nuanced perspective that an AI cannot reproduce from general training data alone.

Adapting Your SaaS Content Funnel for LLM Discovery

The traditional SaaS funnel, moving a lead from a broad “how-to” blog post to a whitepaper and then a demo, is being compressed. In the AI-search era, the “Discovery” and “Consideration” phases often happen simultaneously inside a single chat interface.

Buyers are no longer searching for “best marketing automation tools”; they are prompting, “Compare HubSpot and Marketo for a 200-person B2B team with a focus on multi-touch attribution.” To survive this, your content must be structured as a technical resource that an AI can parse and present as a solution.

Optimizing Technical Documentation for LLM Crawlers

In the past, technical docs and API references were buried in a subdomain for existing users. Now, they are your most potent sales tools. AI models like Gemini and Perplexity prioritize “ground truth” documents (structured, factual, hierarchy-heavy pages) to answer complex “Can it do X?” queries.

- The Fix: Ensure your integration guides and feature documentation are crawlable and use schema markup. If an LLM can’t find your “Salesforce Integration” technical spec, it will tell a potential buyer that you don’t support it.

- The Goal: Turn your product’s “How-to” into the AI’s “Here’s how they do it.”
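As a rough illustration of the schema markup mentioned above, the sketch below builds a schema.org `SoftwareApplication` block in JSON-LD and wraps it in a script tag. The product name, URL, price, and feature list are all placeholders, and the choice of properties is an assumption about what a typical SaaS page might expose, not a required set.

```python
import json


def software_application_jsonld(name, description, url, features, price, currency):
    """Build a schema.org SoftwareApplication description as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "description": description,
        "url": url,
        "applicationCategory": "BusinessApplication",
        "operatingSystem": "Web",
        "featureList": features,
        "offers": {"@type": "Offer", "price": price, "priceCurrency": currency},
    }


def to_script_tag(data: dict) -> str:
    """Serialize to a JSON-LD script tag for the page's HTML."""
    # Escape "</" so a value can never close the script element early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values, not a real product.
markup = software_application_jsonld(
    name="ExampleApp",
    description="Project management for agile teams.",
    url="https://example.com",
    features=["Salesforce integration", "Slack integration"],
    price="49.00",
    currency="USD",
)
print(to_script_tag(markup))
```

Validate the generated markup with a structured-data testing tool before shipping it, since required and recommended properties vary by type.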

Shifting from Persona-Based to Intent-Based Content Clusters

We’ve spent a decade building content for “Marketing Mary” or “Developer Dave.” But AI doesn’t search by persona; it searches by intent and constraints.

Instead of broad persona guides, build content clusters around specific Jobs-to-be-Done (JTBD).

- Old Way: “The Ultimate Guide to CRM for Marketing Managers.”

- New Way: “Mapping Custom CRM Fields for Complex SQL Attribution.”

The latter is highly specific. When a user asks an AI how to solve a specific attribution problem, your content is the precise “key” that fits that “lock.” By clustering your content around these technical outcomes, you create a web of authority that forces the LLM to recognize your product as the specialized leader in that niche.

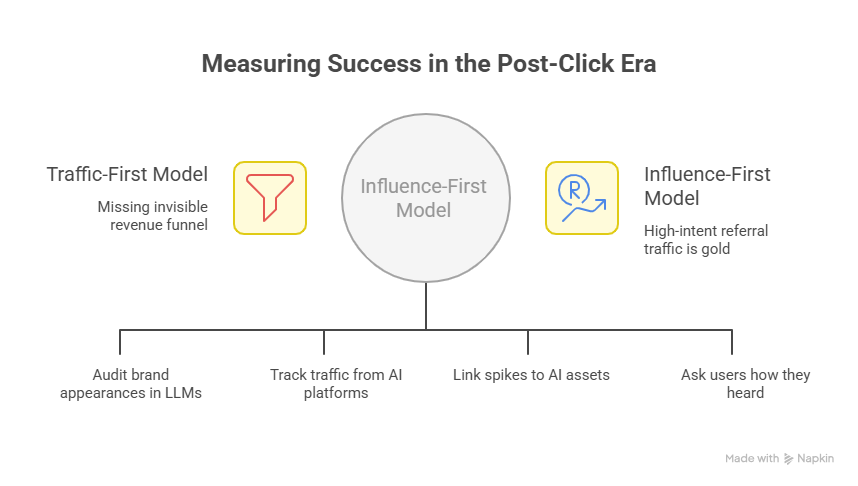

Measuring Success in the Post-Click Era

The hardest pill for SaaS marketers to swallow in 2026 is that a “successful” content piece might result in zero clicks to your website, and still be the reason you closed a $50k deal. When an AI agent answers a query by citing your brand and recommending your software, the “click” happens in the user’s mind, not on the SERP.

We are moving from a Traffic-First model to an Influence-First model. If your dashboard only tracks sessions and bounce rates, you are missing the invisible funnel where your revenue is actually being generated.

Moving Beyond “Vanity Sessions” to “Brand Citations”

If an LLM recommends your product in a “Top 10” list but the user doesn’t click, traditional Google Analytics marks that as a failure. In reality, that citation is a massive win for Brand Share of Voice (SOV).

To measure this, modern SaaS teams are tracking:

- AI Mentions/Citations: Using tools to audit how often your brand appears in LLM responses for primary “Category + Intent” queries.

- Referral Quality from AI: Monitoring traffic from perplexity.ai, chatgpt.com, or gemini.google.com. While the volume is lower, the conversion-to-signup rate is often 3x to 5x higher than standard organic traffic.
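If your analytics tool can export sessions with a referrer and a signup flag, a small script can separate AI referrals from everything else. This is a minimal sketch: the three hostnames come from the list above, while the session tuples and the `(referrer_url, signed_up)` export shape are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hostnames named in the text above; extend as needed.
AI_REFERRER_HOSTS = {"perplexity.ai", "chatgpt.com", "gemini.google.com"}


def classify_referrer(referrer_url: str) -> str:
    """Label a session as 'ai', 'direct' (no referrer), or 'other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if not host:
        return "direct"
    return "ai" if host in AI_REFERRER_HOSTS else "other"


def signup_rate_by_source(sessions):
    """sessions: iterable of (referrer_url, signed_up) pairs."""
    totals = defaultdict(lambda: [0, 0])  # source -> [visits, signups]
    for referrer, signed_up in sessions:
        bucket = totals[classify_referrer(referrer)]
        bucket[0] += 1
        bucket[1] += int(signed_up)
    return {source: signups / visits for source, (visits, signups) in totals.items()}


# Hypothetical export rows, for illustration only.
sample_sessions = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search", False),
    ("https://www.google.com/", False),
    ("https://www.google.com/", False),
    ("", True),
]
rates = signup_rate_by_source(sample_sessions)
print(rates)
```

With real data, compare the "ai" rate against "other" over the same date range, and treat small sample sizes with caution.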

Attribution in a “Zero-Click” World

Since the journey often happens inside a chat interface, “Direct” traffic is becoming the new “Organic.” Users see your brand in a Gemini response, then type your URL directly into their browser or search for your brand name specifically.

- The Metric: Correlate spikes in “Direct” traffic and “Branded Search” with the publication of your high-authority, AI-optimized assets.

- The Framework: Use self-reported attribution (“How did you hear about us?”) on your signup forms. You’ll increasingly see: “I asked ChatGPT for a tool that does X, and it suggested you.”
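A simple way to start on the first metric is a before-and-after comparison around each publication date. The sketch below assumes you can export daily "Direct" session counts; the dates and numbers are made up, and a lift like this shows correlation only, not proof that the asset caused it.

```python
from datetime import date, timedelta
from statistics import mean


def lift_after_publication(daily_sessions, published, window_days=7):
    """Relative change in mean daily sessions, comparing the window
    starting on the publish date with the same-length window before it."""
    before, after = [], []
    for offset in range(1, window_days + 1):
        day = published - timedelta(days=offset)
        if day in daily_sessions:
            before.append(daily_sessions[day])
    for offset in range(window_days):
        day = published + timedelta(days=offset)
        if day in daily_sessions:
            after.append(daily_sessions[day])
    if not before or not after:
        raise ValueError("not enough data around the publication date")
    return mean(after) / mean(before) - 1.0


# Hypothetical daily "Direct" sessions, for illustration only.
direct_sessions = {
    date(2026, 3, 7): 100,
    date(2026, 3, 8): 100,
    date(2026, 3, 9): 100,
    date(2026, 3, 10): 120,
    date(2026, 3, 11): 130,
    date(2026, 3, 12): 110,
}
lift = lift_after_publication(direct_sessions, date(2026, 3, 10), window_days=3)
print(f"Direct traffic lift: {lift:.0%}")
```

Run the same comparison for branded search volume, and cross-check both against self-reported attribution before crediting a specific asset.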

The Reality Check: High organic traffic used to be the goal. In 2026, high-intent referral traffic from AI engines is the gold standard for MRR growth.

Why Most SaaS Teams Fail at GEO (and How We Bridge It)

Understanding how AI search works is one thing; re-engineering your entire content engine to feed the models is another. Most teams get stuck in “analysis paralysis”: they know they need information gain and semantic triples, but they keep producing high-volume, low-value blog posts that the AI simply ignores.

This is where we step in. At SaaS Leady, we don’t just “write articles.” We build high-authority assets designed to be the primary source for the next generation of search.

While others are using AI to churn out generic content that kills their brand authority, we use a human-led, data-first approach to ensure you become the consensus answer in your niche.

We help SaaS founders and marketing teams:

- Audit for Inference: We identify exactly where AI models are misrepresenting your product or favoring competitors, and we rewrite the narrative.

- Generate Original “Ground Truth”: We conduct the primary research and data analysis that LLMs crave, turning your blog into a citation magnet.

- Engineer Semantic Authority: We don’t just add keywords; we structure your content’s “entities,” so AI engines can extract your product’s value as a factual certainty.

If you’re tired of seeing your competitors take the “AI Share of Voice” while your organic traffic stagnates, let’s talk. We’ll show you exactly how to move from being “searchable” to being “cited.”

Implementation Roadmap: Future-Proofing Your Content Strategy

Transitioning to an AI-first strategy doesn’t require an overnight overhaul. It requires a systematic shift in how you structure, verify, and publish your assets. For a SaaS team, the next 30 days should be focused on technical accessibility and high-intent “citation hunting.”

Step 1: The AI-Readiness Audit (Days 1-10)

Before creating new content, you must ensure your current site is “legible” to AI agents.

- llms.txt Implementation: Place a /llms.txt file in your site’s root directory. This acts as a concise, markdown-formatted map of your most important URLs, integrations, and pricing data, specifically designed for LLM ingestion.

- Schema Validation: Use JSON-LD to implement SoftwareApplication, FAQPage, and HowTo schema. AI models use these structured blocks as a “cheat sheet” to verify your product’s features and pricing.

- Crawlability Check: Ensure your core product value is in plain HTML. If your best insights are trapped inside heavy JavaScript or un-indexed PDFs, they effectively don’t exist in the generative ecosystem.
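For the llms.txt step, a small generator keeps the file in sync with your docs. The sketch below assumes the layout commonly used for llms.txt files (an H1 title, a blockquote summary, then H2 sections of markdown links); the site name, URLs, and section names are placeholders.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt body.

    sections maps a heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content, not a real product.
llms_txt = build_llms_txt(
    site_name="ExampleApp",
    summary="Project management software for agile teams.",
    sections={
        "Docs": [
            ("Salesforce integration",
             "https://example.com/docs/integrations/salesforce",
             "Setup steps and supported objects"),
        ],
        "Pricing": [
            ("Plans", "https://example.com/pricing", "Current plans and limits"),
        ],
    },
)
print(llms_txt)
```

Write the result to `llms.txt` at the web root as part of your deploy step, so the file changes whenever the underlying pages do.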

Step 2: Semantic Gap Analysis (Days 11-20)

Identify where your competitors are being cited and you aren’t.

- Prompt Testing: Manually query Gemini and Perplexity with high-intent prompts like “How does [Competitor A] compare to [Your Brand] for enterprise security?”

- Information Gain Refresh: Update your top 5 performing blog posts with unique data points, custom screenshots, or a “Founder’s Perspective” section. This adds the Information Gain that triggers a new citation.
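Once you have pasted a batch of responses from your prompt tests into a list, a few lines of code can turn them into a rough share-of-voice number. This is a sketch under stated assumptions: the brand names and response texts are invented, and simple whole-word matching will miss misspellings or product nicknames.

```python
import re


def mention_share(responses, brands):
    """Fraction of responses that mention each brand at least once."""
    if not responses:
        raise ValueError("responses must not be empty")
    counts = {brand: 0 for brand in brands}
    for text in responses:
        for brand in brands:
            # Case-insensitive whole-word match; assumes brand names
            # start and end with a letter or digit.
            if re.search(rf"\b{re.escape(brand)}\b", text, flags=re.IGNORECASE):
                counts[brand] += 1
    return {brand: counts[brand] / len(responses) for brand in brands}


# Invented responses and brand names, for illustration only.
collected_responses = [
    "For enterprise security, Acme and Globex both offer SSO.",
    "Globex is a common choice for SOC 2 reporting.",
    "Teams often shortlist Globex or Initech.",
    "acme supports SCIM provisioning.",
]
shares = mention_share(collected_responses, ["Acme", "Globex", "Initech"])
print(shares)
```

Re-run the same prompts on a fixed schedule and track how the shares move after each content refresh, since a single batch of responses can vary from run to run.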

Step 3: The “Citation Outreach” Cycle (Days 21-30)

AI models are heavily influenced by “Consensus Signals,” which are what other high-authority sites say about you.

- Review Aggregation: Focus on getting fresh, detailed reviews on G2, Capterra, and TrustRadius that mention specific technical features. LLMs scrape these to build “Consensus Summaries.”

- Partner Content: Publish a guest post or co-marketing piece on a high-authority domain (e.g., a technical partner like AWS or Stripe). A mention on these “seed sites” is worth more for AI visibility than 100 low-quality backlinks.

The “Next 30 Days” SaaS Execution Checklist

- Launch /llms.txt to give AI agents a direct path to your key documentation.

- Update the dateModified property (the “last modified” date) in your Article schema to signal freshness.

- Identify 5 “Semantic Triples” for your core product features and embed them in your H2s.

- Schedule 3 customer interviews to extract unique “Experience” signals for your next research report.